Seminar: Learning with Limited Supervision

Prof. Dr. Abhinav Valada

Co-Organizers:

Rohit Mohan, Dr. Daniele Cattaneo, Julia Hindel, Nick Heppert, Martin Büchner, Adrian Röfer

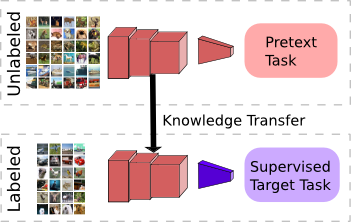

Deep learning has become a key enabler of real-world autonomous systems. While classical supervised learning methods typically rely on ground-truth information, the area of autonomous robotics requires less dependence on manual supervision. The research directions of semi- and self-supervised learning instead aim to learn representations without explicit supervision, potentially even without any manual annotation. Robot learning, in particular, requires scaling to large amounts of unlabeled data in a lifelong manner. Self- and semi-supervised learning have already had a significant impact on fields such as perception, state estimation, control, and graph representation learning, enabling important progress in object manipulation, scene understanding, visual recognition, object tracking, and learning-based control, amongst others. In this seminar, we will study a selection of state-of-the-art works that propose deep learning techniques for tackling various challenges in autonomous systems. Specifically, we will analyze contributions in architecture design and learning paradigms in the areas of computer vision, reinforcement learning, imitation learning, and deep graph learning.

Course Information

Details:

Course Number: 11LE13S-7310-M

Places: 12

Location: Georges-Köhler-Allee 80, Room Number 00.021

Course Program:

Introduction: 19/04/2023 @ 11:00

How to make a presentation: 22/06/2023 @ 14:00

Block Seminar: 24/07/2023 @ 09:00

Evaluation Program:

Abstract Due Date: 26/06/2023 @ 23:59 CEST

Seminar Presentation: 24/07/2023

Summary Due Date: 04/08/2023 @ 23:59 CEST

Requirements:

Basic knowledge of Deep Learning or Reinforcement Learning

Remarks:

Topics will be assigned for the seminar via preference voting. If there are more interested students than places, places will be assigned based on the priority suggestions of the HISinOne system and on motivation (assessed by asking for a short summary of the preferred paper). The date of registration is irrelevant. We particularly want to avoid students claiming a topic and then leaving the seminar. Please take a brief look at all available papers so that you can make an informed decision before you commit.

Course Material

Slides:

Lecture 1: Introduction

Lecture 2: How to Make a Presentation

Templates:

Additional Information

Enrollment Procedure

- Enroll through HISinOne, the course number is 11LE13S-7310-M.

- The registration period for the seminars is from 17/04/2023 to 24/04/2023.

- Attend the introductory session on 19/04/2023.

- Select three papers from the topic list (see below) and complete this form by 24/04/2023.

- Places will be assigned on 25/05/2023 based on the priority suggestions of HISinOne and the students' motivation.

Evaluation Details

- Students are expected to write an abstract, prepare a 20-minute presentation, and write a summary.

- The abstract should not exceed two pages and is due on 26/06/2023.

- The seminar will be held as a "Blockseminar" on 24/07/2023.

- The slides of your presentation should be discussed with the supervisor two weeks before the Blockseminar.

- The summary should not exceed seven pages (excluding bibliography and images) and is due on 04/08/2023. Significantly longer summaries will not be accepted.

- Make sure to cite all work you use, including images and illustrations. Where possible, use your own illustrations.

- The final grade is based on the oral presentation, the written abstract, the summary, and participation in the Blockseminar.

What should the Summary contain?

The summary should address the following questions:

- What is the paper's main contribution and why is it important?

- How does it relate to other techniques in the literature?

- What are the strengths and weaknesses of the paper?

- What would be some interesting follow-up work? Can you suggest any possible improvements in the proposed methods? Are there any further interesting applications that the authors might have overlooked?

Graded Component Submission

- Save your document as a PDF and submit it directly to your topic supervisor via email.

- The filename should be in the format "FirstName_LastName_X.pdf" where X is the evaluation component (Abstract / Summary / Presentation).

Topics

- Task and Motion Planning with Large Language Models for Object Rearrangement
  Supervisor: Adrian Röfer

- Generative Agents: Interactive Simulacra of Human Behavior
  Supervisor: Adrian Röfer

- Grounded Decoding: Guiding Text Generation with Grounded Models for Robot Control
  Supervisor: Adrian Röfer

- RSF: Optimizing Rigid Scene Flow From 3D Point Clouds Without Labels
  Supervisor: Daniele Cattaneo

- Unsupervised 4D LiDAR Moving Object Segmentation in Stationary Settings with Multivariate Occupancy Time Series
  Supervisor: Daniele Cattaneo

- SALAD: Source-free Active Label-Agnostic Domain Adaptation for Classification, Segmentation and Detection
  Supervisor: Daniele Cattaneo

- Self-supervised Monocular Multi-robot Relative Localization with Efficient Deep Neural Networks
  Supervisor: Julia Hindel

- Self-Supervised Camera Self-Calibration from Video
  Supervisor: Julia Hindel

- Cross-Domain Correlation Distillation for Unsupervised Domain Adaptation in Nighttime Semantic Segmentation
  Supervisor: Julia Hindel

- Transformers are adaptable task planners
  Supervisor: Martin Büchner

- LM-Nav: Robotic Navigation with Large Pre-Trained Models of Language, Vision, and Action
  Supervisor: Martin Büchner

- R3M: A Universal Visual Representation for Robot Manipulation
  Supervisor: Martin Büchner

- Learning by Watching: Physical Imitation of Manipulation Skills from Human Videos
  Supervisor: Nick Heppert

- MimicPlay: Long-Horizon Imitation Learning by Watching Human Play
  Supervisor: Nick Heppert

- Self-Supervised Geometric Correspondence for Category-Level 6D Object Pose Estimation in the Wild
  Supervisor: Nick Heppert

- MonoDVPS: A Self-Supervised Monocular Depth Estimation Approach to Depth-aware Video Panoptic Segmentation
  Supervisor: Rohit Mohan

- ConfMix: Unsupervised Domain Adaptation for Object Detection via Confidence-based Mixing
  Supervisor: Rohit Mohan

- Multi-Frame Self-Supervised Depth with Transformers
  Supervisor: Rohit Mohan

Questions?

If you have any questions, please direct them to Rohit Mohan before the topic allotment, and to your supervisor after you have received your topic.